Cloud technology has made data engineering faster, easier, and highly scalable. Today, teams can build powerful data pipelines in days instead of months.

But this speed comes with a hidden challenge: cloud costs that quietly grow until they become a serious concern.

Many data projects begin small and affordable. Then data grows, pipelines run more frequently, and storage keeps expanding. Suddenly, the monthly bill looks nothing like the original estimate.

And everyone asks the same question:

“How did it get this expensive?”

The reality is simple: cloud costs rarely explode overnight. They creep up when pipelines are built without thinking about efficiency, scalability, and long-term usage.

Good data engineering is not just about making pipelines run.

It’s about making them run smartly, efficiently, and responsibly.

Why Cloud Costs Increase So Quickly

Cloud pricing works on a pay-for-usage model. The more you store, process, and move data, the more you pay. While this flexibility allows businesses to scale easily, it also requires careful planning and monitoring.

Costs usually grow because of:

- Reprocessing the same large datasets repeatedly

- Running pipelines more often than business needs require

- Storing duplicate or unused data

- Using oversized compute resources for small workloads

- Lack of regular monitoring

- Poor data lifecycle management

- Inefficient data transformation logic

Individually, these seem small.

Together, they quietly build a large bill.

Another common reason is the rapid adoption of new tools and services without cost governance policies. When teams experiment or scale workloads without visibility into consumption patterns, costs increase unexpectedly.

A Simple Way to Understand It

Think of cloud usage like water at home.

If a tap is left open, water keeps flowing and the bill keeps rising, not because water is expensive but because its use is unmanaged.

Cloud pipelines behave the same way.

When jobs run unnecessarily or data is stored without cleanup, costs continue to flow.

Control the usage, and you control the cost.

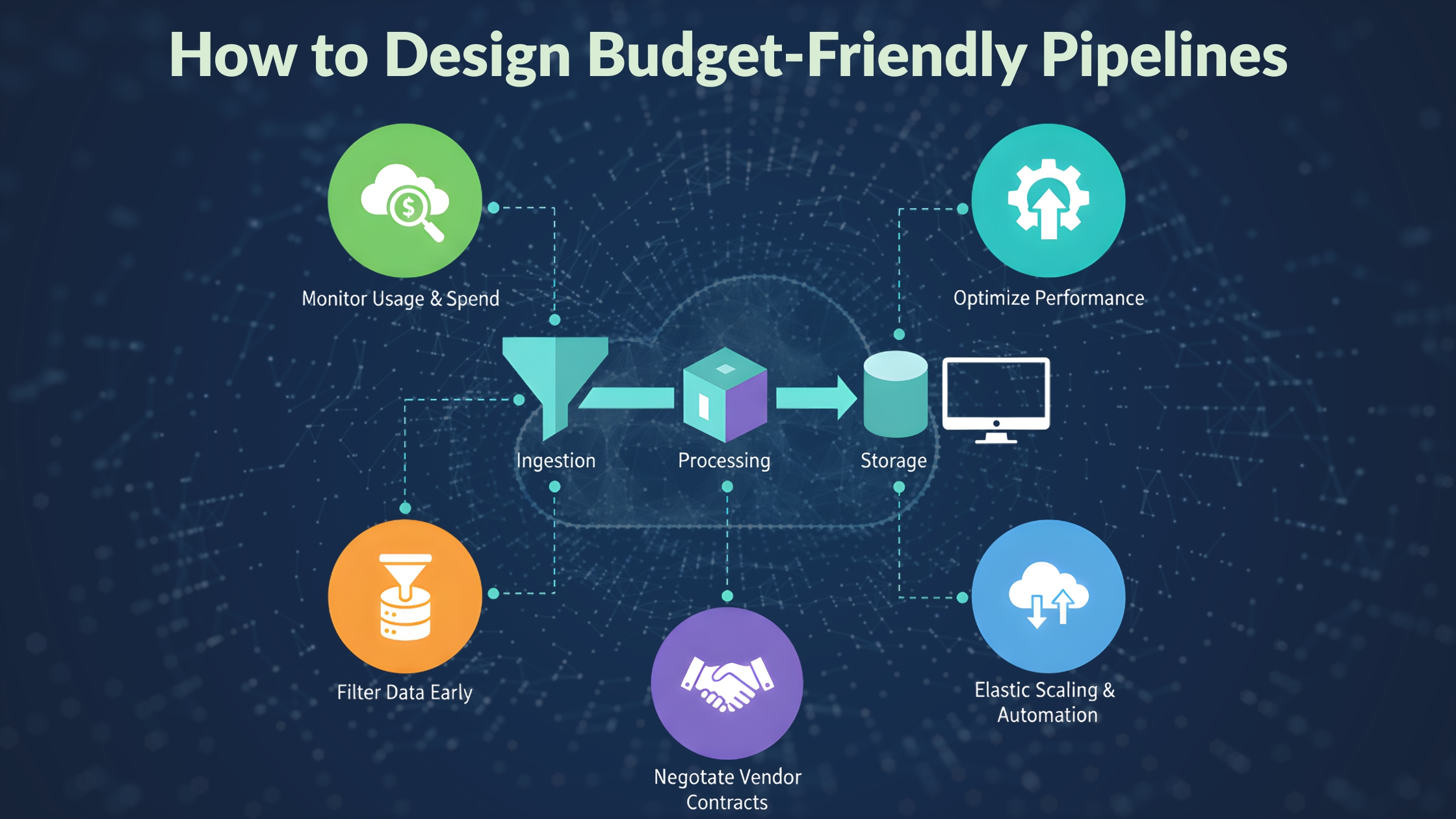

How to Design Budget-Friendly Pipelines

Process Only What Changed

Instead of moving full datasets every time, move only new or updated data. Incremental loading and change data capture (CDC) cut compute and data transfer costs because each run touches only the rows that changed since the previous run.
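As a rough illustration, incremental processing can be sketched with a "high-watermark" timestamp: each run picks up only rows modified after the last value it saw. This is a minimal sketch, not log-based CDC, and the `updated_at` column, the row layout, and the function name `select_changed_rows` are assumptions made for the example.

```python
from datetime import datetime


def select_changed_rows(rows, last_watermark):
    """Return rows updated after last_watermark, plus the new watermark.

    Assumes every row carries an `updated_at` timestamp (hypothetical column).
    """
    changed = [r for r in rows if r["updated_at"] > last_watermark]
    # If nothing changed, keep the old watermark so the next run starts from it.
    new_watermark = max((r["updated_at"] for r in changed), default=last_watermark)
    return changed, new_watermark


source_rows = [
    {"id": 1, "updated_at": datetime(2024, 1, 1)},
    {"id": 2, "updated_at": datetime(2024, 1, 5)},
    {"id": 3, "updated_at": datetime(2024, 1, 9)},
]

# The previous run finished with a watermark of 2024-01-03,
# so only rows 2 and 3 need to be processed now.
changed, watermark = select_changed_rows(source_rows, datetime(2024, 1, 3))
print([r["id"] for r in changed], watermark)
```

In a real pipeline the watermark would be persisted between runs (for example in a small state table) so that a failed run can safely be retried from the last committed value.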

Store Data Wisely

Keep frequently used data in high-performance storage and move older or less frequently accessed data to lower-cost storage tiers. Implementing automated data tiering strategies helps balance performance and cost.
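One way to picture a tiering rule is as a function of how long ago a dataset was last read. The sketch below is illustrative only: the tier names and the 30-day and 180-day thresholds are assumptions, not values from any particular cloud provider, and most providers offer lifecycle policies that apply rules like this automatically.

```python
from datetime import date

# Hypothetical thresholds; tune them to real access patterns.
HOT_DAYS = 30
WARM_DAYS = 180


def choose_tier(last_accessed, today):
    """Pick a storage tier from the number of days since the last access."""
    age_days = (today - last_accessed).days
    if age_days <= HOT_DAYS:
        return "hot"   # high-performance storage
    if age_days <= WARM_DAYS:
        return "warm"  # cheaper, slower storage
    return "cold"      # archive storage


today = date(2024, 7, 1)
for last_read in (date(2024, 6, 20), date(2024, 3, 1), date(2023, 1, 1)):
    print(last_read, choose_tier(last_read, today))
```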

Run Pipelines with Purpose

If daily updates are enough, hourly runs only increase costs without adding value. Align pipeline frequency with actual business requirements.
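A back-of-the-envelope calculation shows why frequency matters. The sketch below assumes each run has a fixed overhead (cluster start-up, scheduling, metadata reads) on top of a data-dependent cost that is the same per day regardless of how the day is split into runs. All figures are made up for illustration.

```python
def monthly_cost(runs_per_day, fixed_cost_per_run, daily_data_cost, days=30):
    """Estimate monthly pipeline cost.

    fixed_cost_per_run: overhead paid on every run (hypothetical figure).
    daily_data_cost: cost of processing one day's data, however it is batched.
    """
    return days * (runs_per_day * fixed_cost_per_run + daily_data_cost)


hourly = monthly_cost(runs_per_day=24, fixed_cost_per_run=0.5, daily_data_cost=2.0)
daily = monthly_cost(runs_per_day=1, fixed_cost_per_run=0.5, daily_data_cost=2.0)
print(f"hourly schedule: {hourly:.2f}, daily schedule: {daily:.2f}")
```

Under these assumed numbers the same data costs several times more on an hourly schedule, purely because the fixed overhead is paid 24 times a day.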

Monitor Usage Regularly

Tracking usage helps catch unusual spikes early. Budget alerts, cost dashboards, and monitoring tools provide visibility and prevent unexpected expenses.
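A simple form of spike detection compares each day's spend with the average of the preceding days. The following is a minimal sketch under assumed inputs: a list of daily cost totals exported from a billing report, a 7-day window, and a 1.5x threshold. Both parameters are illustrative defaults, not recommendations.

```python
from statistics import mean


def find_cost_spikes(daily_costs, window=7, threshold=1.5):
    """Return the indexes of days whose cost exceeds threshold x the trailing average."""
    spikes = []
    for i in range(window, len(daily_costs)):
        baseline = mean(daily_costs[i - window:i])
        if baseline > 0 and daily_costs[i] > threshold * baseline:
            spikes.append(i)
    return spikes


# Hypothetical daily spend; day 8 is the outlier.
costs = [100, 102, 98, 101, 99, 100, 100, 103, 180, 105]
print(find_cost_spikes(costs))
```

Managed budget alerts cover the common case; a check like this is mainly useful when cost data is already flowing into the team's own dashboards.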

Right-Size Compute

Large machines are not always better. Choose compute resources that match workload requirements and scale dynamically when needed.
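Right-sizing can be framed as picking the cheapest machine that still satisfies the workload's requirements. In the sketch below the instance names, sizes, and hourly prices are invented for illustration and do not correspond to any provider's catalog.

```python
from typing import NamedTuple


class InstanceType(NamedTuple):
    name: str
    vcpus: int
    memory_gb: int
    hourly_cost: float


# Hypothetical catalog.
CATALOG = [
    InstanceType("small", 2, 8, 0.10),
    InstanceType("medium", 4, 16, 0.20),
    InstanceType("large", 16, 64, 0.80),
]


def cheapest_fit(required_vcpus, required_memory_gb, catalog=CATALOG):
    """Return the cheapest instance meeting both requirements, or None if none fits."""
    candidates = [
        t for t in catalog
        if t.vcpus >= required_vcpus and t.memory_gb >= required_memory_gb
    ]
    return min(candidates, key=lambda t: t.hourly_cost, default=None)


# A job needing 3 vCPUs and 10 GB fits on "medium"; "large" would cost 4x as much.
print(cheapest_fit(3, 10))
```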

Optimize Data Transformation

Poorly designed transformation logic can increase processing time and compute usage. Efficient queries, optimized joins, and partitioning strategies reduce processing costs.
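The effect of partitioning can be shown with a toy example: when data is grouped by date, a query for one day reads only that day's partition instead of scanning everything. The sketch below uses plain Python dictionaries to stand in for partitioned storage. The data, the `event_date` and `amount` fields, and the function names are hypothetical.

```python
# Data partitioned by event date (hypothetical layout).
partitions = {
    "2024-01-01": [{"amount": 10}, {"amount": 20}],
    "2024-01-02": [{"amount": 5}],
    "2024-01-03": [{"amount": 7}, {"amount": 3}, {"amount": 1}],
}

# The same data as one unpartitioned table.
flat_rows = [
    {"event_date": day, **row} for day, rows in partitions.items() for row in rows
]


def total_full_scan(rows, day):
    """Sum amounts for one day by reading every row. Returns (total, rows_scanned)."""
    total = sum(r["amount"] for r in rows if r["event_date"] == day)
    return total, len(rows)


def total_pruned(parts, day):
    """Sum amounts for one day by reading only its partition. Returns (total, rows_scanned)."""
    rows = parts.get(day, [])
    return sum(r["amount"] for r in rows), len(rows)


print(total_full_scan(flat_rows, "2024-01-03"))
print(total_pruned(partitions, "2024-01-03"))
```

Both calls return the same total, but the pruned version reads half as many rows here. On engines that bill by data scanned, that difference shows up directly on the invoice.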

Automate Resource Shutdown

Idle clusters, test environments, and unused compute resources often continue running unnoticed. Automating shutdown policies helps eliminate unnecessary expenses.
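The core of a shutdown policy is a check for resources that are running but have seen no activity for some time. The sketch below only decides which clusters should be stopped; the cluster records, the `last_activity` field, and the 30-minute limit are assumptions, and the actual stop call would depend on the provider's API.

```python
from datetime import datetime, timedelta

IDLE_LIMIT = timedelta(minutes=30)  # hypothetical policy


def clusters_to_stop(clusters, now, idle_limit=IDLE_LIMIT):
    """Return the names of running clusters idle for longer than idle_limit."""
    return [
        c["name"]
        for c in clusters
        if c["state"] == "running" and now - c["last_activity"] > idle_limit
    ]


now = datetime(2024, 7, 1, 12, 0)
clusters = [
    {"name": "etl-prod", "state": "running", "last_activity": datetime(2024, 7, 1, 11, 55)},
    {"name": "dev-test", "state": "running", "last_activity": datetime(2024, 7, 1, 9, 0)},
    {"name": "old-poc", "state": "stopped", "last_activity": datetime(2024, 6, 1, 9, 0)},
]
print(clusters_to_stop(clusters, now))
```

Many managed platforms expose an auto-termination setting that does the same thing. A script like this is mostly useful for resources that lack one.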

The Practical Mindset

Strong data engineers don’t just ask, “Will this work?”

They also ask:

- Do we really need this much data movement?

- Is this pipeline running too often?

- Are we storing data that no one uses?

- Are we paying for idle or underutilized resources?

- Can this transformation be optimized?

These small questions protect budgets over time and improve system performance.

Business Value of Cost Control

When cloud costs are managed well:

- Budgets stay predictable

- Projects deliver better ROI

- Teams scale with confidence

- Leadership trusts data investments

- Resources can be reinvested into innovation

- Data platforms remain sustainable and scalable

Cost awareness does not limit innovation; it makes innovation sustainable and strategically aligned with business goals.

The Role of Governance and FinOps

Modern organizations are adopting FinOps (Financial Operations) practices to manage cloud spending effectively. FinOps encourages collaboration between engineering, finance, and business teams to monitor, analyze, and optimize cloud usage continuously.

Strong governance policies ensure:

- Clear cost ownership

- Transparent usage tracking

- Standardized resource deployment

- Controlled scaling of workloads

This structured approach helps organizations maintain financial discipline while supporting rapid data growth.

Conclusion

Cloud is a powerful tool, but it works best with discipline.

Design pipelines carefully.

Move data wisely.

Monitor usage regularly.

Optimize continuously.

The goal is not just to build pipelines that run, but to build pipelines that run efficiently, scale responsibly, and deliver long-term business value without draining the budget.